Semaphore recommends all NGS clinical diagnostic labs adopt comprehensive software testing to reduce errors, improve efficiency, and save money. The right approach and the right amount of testing ensure the lab’s software systems operate correctly and can be easily maintained in the future.

Whether your lab is implementing an entirely new software solution, integrating two or more systems, or upgrading an existing system, it’s best to consider testing at the beginning of the project so that the software is built with project goals in mind.

Some of the questions labs ask include:

- What types of testing will be most effective?

- Can any of the testing be automated?

- What proof of testing is necessary?

- If proof is needed, will the test management system automatically capture it?

- How can these tests be used to help validate the system?

Software testing is a necessary and invaluable part of software development

The key benefits of software testing are:

- Supports quality assurance (QA). Labs need to know if a change in the system inadvertently breaks a component, integration, or system flow. Good integration and end-to-end coverage increase confidence when large changes to the system are required. In conjunction with developer-built testing, a robust QA cycle allows for a higher cadence of development output with lower overall QA/regression effort.

- Enhances security. Any security aspects of the system need to be thoroughly tested during development phases and after release to ensure sensitive or vulnerable pieces of the code are secure. Without testing, you can’t be sure that you haven’t introduced a security vulnerability that could open the door to a cybersecurity breach.

- Leads to cost savings. Identifying errors early on in the development process lets you save time and money compared to discovering issues later in the pipeline. Even better, it minimizes the risk and potential embarrassment of customers discovering these errors.

- Improves end-user satisfaction. If the software is difficult to use and does not accomplish its primary goals, the impact on your skilled employees can be dramatic. End users who are satisfied with a product and can use it with minimal frustration are much more likely to adopt a product or solution and continue using it.

- Makes it easier to earn certification, accreditation, and FDA approvals. Testing is an important element in all levels of certification and approvals. It provides evidence of a robust software architecture designed to prevent errors and failures in the long term.

Types of software testing

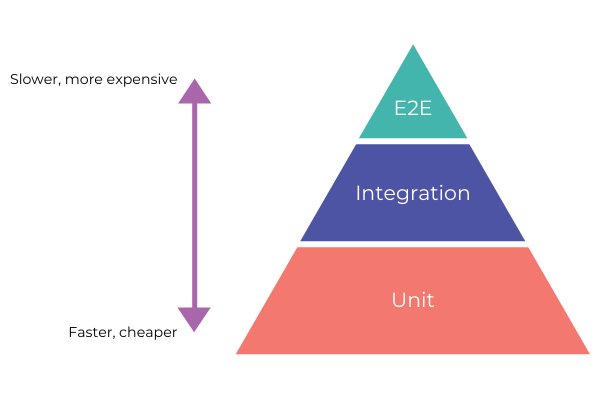

Software testing is the practice of investigating and measuring the quality of software. Depending on the specific type of testing, it can be performed manually or automatically. In the case of laboratory software, each level of testing adds value to the software implementation, but not all to the same degree or with the same effort.

Tests are typically categorized as functional or non-functional. Functional tests map to stages of software development, such as unit, integration, system, or end-to-end testing, while non-functional tests may include performance, load, stress, volume, and security testing. Because non-functional testing is so varied and often falls outside the standard development process, we focus on the functional test definitions below.

Unit testing

Unit testing refers to the testing of individual units or components of a system. Each unit is validated or tested separately, without considering dependencies on other units. Unit tests should be created during the development of the code under test and are generally run automatically via build systems to ensure stability when changes are introduced. Unit tests help to preemptively find and diagnose issues and provide an up-to-date picture of the health of your system.
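As a rough illustration, a unit test in Python's built-in `unittest` module might look like the sketch below. The `reverse_complement` function is a hypothetical unit chosen for this example, not part of any particular lab system.

```python
import unittest


def reverse_complement(sequence: str) -> str:
    """Return the reverse complement of a DNA sequence (hypothetical unit under test)."""
    complement = {"A": "T", "C": "G", "G": "C", "T": "A", "N": "N"}
    try:
        return "".join(complement[base] for base in reversed(sequence.upper()))
    except KeyError as exc:
        raise ValueError(f"Unexpected base: {exc.args[0]!r}") from exc


class ReverseComplementTest(unittest.TestCase):
    """Each test exercises the unit in isolation, with no external dependencies."""

    def test_simple_sequence(self):
        self.assertEqual(reverse_complement("ATGC"), "GCAT")

    def test_lowercase_input_is_normalised(self):
        self.assertEqual(reverse_complement("aacg"), "CGTT")

    def test_invalid_base_is_rejected(self):
        with self.assertRaises(ValueError):
            reverse_complement("ATXG")
```

Saved in a file, tests like these can be run with `python -m unittest`, which is also how a build system would typically invoke them.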

Integration testing

As with unit testing, integration testing can be run automatically via build systems to ensure stability. However, rather than testing units or components independently, integration testing investigates how they behave once combined. Units are grouped and tested together to ensure successful integration. The test artifacts can be saved to show the system’s health over a period of time or to help document the system’s reliability.
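To make the distinction from unit testing concrete, here is a minimal sketch in which two small units are combined and tested as a group. The sample-sheet format, the `Sample` type, and all function names are assumptions made up for this example.

```python
import unittest
from dataclasses import dataclass


@dataclass(frozen=True)
class Sample:
    sample_id: str
    index_sequence: str


def parse_sample_line(line: str) -> Sample:
    """Unit 1: turn one comma-separated line into a Sample."""
    sample_id, index_sequence = (field.strip() for field in line.split(","))
    return Sample(sample_id, index_sequence.upper())


def is_valid_index(index_sequence: str) -> bool:
    """Unit 2: accept only non-empty indexes made of A, C, G, T."""
    return bool(index_sequence) and set(index_sequence) <= set("ACGT")


def load_samples(lines: list[str]) -> list[Sample]:
    """Integration point: parse every non-blank line, then reject invalid indexes."""
    samples = [parse_sample_line(line) for line in lines if line.strip()]
    bad = [s.sample_id for s in samples if not is_valid_index(s.index_sequence)]
    if bad:
        raise ValueError(f"Invalid index for samples: {', '.join(bad)}")
    return samples


class LoadSamplesIntegrationTest(unittest.TestCase):
    """Exercises parsing and validation together rather than separately."""

    def test_units_work_together(self):
        samples = load_samples(["S1, acgt", "", "S2,TTGA"])
        self.assertEqual(samples, [Sample("S1", "ACGT"), Sample("S2", "TTGA")])

    def test_invalid_index_is_reported_by_sample_id(self):
        with self.assertRaisesRegex(ValueError, "S2"):
            load_samples(["S1,ACGT", "S2,ACXT"])
```

The second test is the kind of check a unit test alone would miss: it confirms that a validation failure in one unit is reported using data produced by the other.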

System testing

System testing is performed on the entire software system, including any integrations and dependencies. It’s used to prove that the system meets acceptance criteria and to verify compliance with regulatory or system-defined standards. These tests can be automated for ease of use or run manually. Various approaches exist to document and track these tests for future reporting needs.

This level of testing may use mocked-up integrations when live interactions are not possible. It’s a good check to run before end-to-end testing or user acceptance testing, the stages at which end users may be involved and all system components should be active for review.
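A mocked-up integration can be sketched with Python's standard `unittest.mock` module. The LIMS client, its `update_run_status` method, and the response shape below are hypothetical stand-ins for whatever external system is unavailable in the test environment.

```python
import unittest
from unittest.mock import Mock


def report_run_result(lims_client, run_id: str, passed_qc: bool) -> str:
    """Push a run's QC status to a LIMS via a client object (hypothetical interface)."""
    status = "QC_PASSED" if passed_qc else "QC_FAILED"
    response = lims_client.update_run_status(run_id, status)
    if not response.get("ok"):
        raise RuntimeError(f"LIMS rejected status update for run {run_id}")
    return status


class ReportRunResultTest(unittest.TestCase):
    def test_status_is_sent_to_lims(self):
        lims = Mock()  # stands in for the live LIMS connection
        lims.update_run_status.return_value = {"ok": True}
        self.assertEqual(report_run_result(lims, "RUN-001", True), "QC_PASSED")
        lims.update_run_status.assert_called_once_with("RUN-001", "QC_PASSED")

    def test_lims_rejection_is_surfaced(self):
        lims = Mock()
        lims.update_run_status.return_value = {"ok": False}
        with self.assertRaises(RuntimeError):
            report_run_result(lims, "RUN-002", False)
```

A mock only proves the code behaves correctly against the assumed interface, so the same flow still needs to be checked against the real integration later in the test cycle.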

End-to-end (E2E) testing

In end-to-end testing, the flow or functionality of an application is validated from start to finish. (E2E suites are often re-run as regression tests after a change, so the two terms are sometimes used interchangeably, although regression testing can be performed at any level.) This often involves testing multiple systems or components to ensure all areas of system operation are working consistently and correctly. Although it can be automated, it almost always requires an element of human testing and, as with system testing, its value lies in verifying system compliance.

During E2E testing, every part of the system should be thoroughly exercised in a manner as close to the expected production functionality (including infrastructure) as possible. Limitations in testing environments may require the generation of test data or system integrations, but staying as true to production as possible is very important to avoid surprises once the system is deployed.
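Where test data has to be generated, one option is to build it deterministically so that every test cycle sees the same input. The sketch below produces synthetic FASTQ records using only the standard library. The function name, read naming scheme, and constant quality string are assumptions for illustration, and real E2E data would need to resemble production data far more closely.

```python
import random


def make_fastq_records(count: int, read_length: int = 50, seed: int = 0) -> str:
    """Build `count` synthetic FASTQ records; a fixed seed keeps runs repeatable."""
    rng = random.Random(seed)
    records = []
    for i in range(count):
        bases = "".join(rng.choice("ACGT") for _ in range(read_length))
        quality = "I" * read_length  # "I" is Phred 40 in Phred+33 encoding
        records.append(f"@synthetic_read_{i}\n{bases}\n+\n{quality}\n")
    return "".join(records)


if __name__ == "__main__":
    # Print two short reads to show the four-line FASTQ record layout.
    print(make_fastq_records(2, read_length=12, seed=42), end="")
```

Because the generator is seeded, two calls with the same arguments return identical text, which makes any difference in downstream results attributable to the system rather than to the input.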

User acceptance testing

User acceptance testing is often the final component of verification before new software is deployed. This process is typically handled by end users in conjunction with software teams and should focus on user interactions within the system. A software system that offers less functionality or, worse, has incorrect functionality is unlikely to be widely adopted by users. Similarly, a system that functions correctly but is frustrating to use will have a low adoption level. This level of testing is a great way to have end users sign off on a product before they are required to use it, and it helps build confidence in a system prior to deployment.

Automated vs. manual testing

Automated testing is often seen as the best approach for testing coverage and efficiency. It’s a great choice for tests that are repeatable and have clear outcomes or results. Also, it offers an easy solution for identifying current project or development health because automated tests can be run more often without the associated human cost.

However, automated testing is not without limitations. It often requires more investment upfront than manual testing. It’s best built at the same time as a product is developed rather than added in the future. And it requires maintenance. It’s not a solution you can create and leave to succeed on its own. You must update it regularly during development to ensure coverage is complete.

Manual testing, on the other hand, can find issues missed by code-based testing, especially when it is exploratory in nature. For instance, issues related to user interactions can be difficult to identify using automated tests because they may not emulate user activity thoroughly.

Despite these benefits, manual testing may require a greater investment long term because repeated testing requires repeated human interaction. It can also present issues when tests are not documented and run consistently between test cycles (or users). Re-running the same tests manually over and over again can also lead to complacency in results verification, which might be disastrous over time.

Most of the previously defined types of testing can be automated for better consistency, to save time and labor, and to support more frequent software updates. We recommend the use of automated tests to:

- Avoid the potential risk of errors associated with manual testing.

- Ensure testing is repeatable—automated tests do exactly what you code them to do every time.

- Eliminate repetition.

- Provide consistency across releases.

- Increase the speed of testing.

- Reduce the need for human intervention and resources.

- Reduce the cost of testing software updates.
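As one possible way to get repeatable runs that also leave a record behind, the sketch below runs a test class programmatically and summarizes the outcome as a dictionary that could be archived as proof of testing. `ExampleChecks` and `run_and_record` are hypothetical names, and the placeholder tests stand in for a lab's real suite.

```python
import json
import unittest
from datetime import datetime, timezone


class ExampleChecks(unittest.TestCase):
    """Placeholder tests standing in for a lab's real suite."""

    def test_sample_id_format(self):
        self.assertRegex("S-0001", r"^S-\d{4}$")

    def test_read_count_is_positive(self):
        self.assertGreater(1200, 0)


def run_and_record(test_case: type) -> dict:
    """Run one TestCase class and return a summary suitable for archiving."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(test_case)
    result = unittest.TestResult()
    suite.run(result)
    return {
        "suite": test_case.__name__,
        "run_at": datetime.now(timezone.utc).isoformat(),
        "tests_run": result.testsRun,
        "failures": len(result.failures),
        "errors": len(result.errors),
        "passed": result.wasSuccessful(),
    }


if __name__ == "__main__":
    # Emit the summary as JSON so it can be stored alongside the release.
    print(json.dumps(run_and_record(ExampleChecks), indent=2))
```

A dedicated test management system would normally capture far more detail. The point here is only that an automated run can produce its own evidence without extra human effort.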

Automated testing might appear to be a significant investment initially, but it offers a high return on investment (ROI) in the long run. Labs that implement automated testing save time and effort, and can address errors more quickly, as opposed to having to re-visit code areas in future development cycles. If you want to ensure your software development life cycle and lab processes run as efficiently as possible, finding the right balance between manual and automated testing is crucial.

Factors to consider

Software testing covers many different areas. During a standard development process, feature testing and verification should be embedded in the development cycle. Whether these tests are automated or manual, having them integrated with the development process ensures that code is exercised as close to the time of creation as possible. The goal is to minimize the effort required to fix any issues identified. Independent of the regular development cycles or sprints, testing also plays a large role in system health and project confidence over time.

Software testing development effort should reflect an approximate 70/20/10 split, commonly read as roughly 70% unit testing, 20% integration testing, and 10% system and E2E testing (see the Test Pyramid below). Unit and integration tests provide a constant source of low-level health checks in the system and can be added during regular development cycles. System and E2E tests should make up the smallest share of the tests in the system, but they may take more time to prepare and execute than the rest of the testing combined. Their value is still extremely high, as they are often the final verifications before deployments or user interactions.
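For planning purposes, the split can be turned into a simple calculation. The sketch below assumes the 70/20/10 ratio maps to unit, integration, and system plus E2E testing respectively. The function name and the 40-hour budget are illustrative only.

```python
def split_effort(total_hours: float, ratios=(0.70, 0.20, 0.10)) -> dict:
    """Divide a test-development budget across the three levels of the pyramid."""
    labels = ("unit", "integration", "system_and_e2e")
    return {label: round(total_hours * ratio, 1) for label, ratio in zip(labels, ratios)}


if __name__ == "__main__":
    # A 40-hour budget gives 28 hours of unit, 8 of integration, 4 of system/E2E work.
    print(split_effort(40))
```

The ratio is a starting point rather than a rule, and a lab with many external integrations may reasonably shift more effort toward the upper levels.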

Apart from considering the effort involved in developing the tests, labs also need to ensure they have the proper resources to implement and maintain software testing. Developers should follow best practices to write and debug test scripts. It’s also important to remember that it’s not enough to simply write the tests—they must be maintained and run whenever there is a change to the codebase. Labs shouldn’t be complacent about testing. While time-consuming, testing is a critically important part of developing robust software systems.

Now is the time to implement good software testing practices

It’s easier and significantly more cost-effective to build in testing from the beginning of a software project rather than after the fact. Adding tests to software that has already been written is still time well spent, but it is likely to cost more than building them in from the start. Well-written tests help define and clarify system behavior and result in more robust software, while good test coverage enables developers to confidently update software at any point in the system’s life cycle. If you work with us, you can be assured that good software testing practices will be a pivotal part of any project in which we are involved.